The Line Between Deepfake, Satire, and Propaganda Is Disappearing in Real Time

What's happening around the Iran conflict is not an isolated media glitch. It's a live information war playing out through feeds, reposts, and synthetic imagery that spreads before anyone can check it.

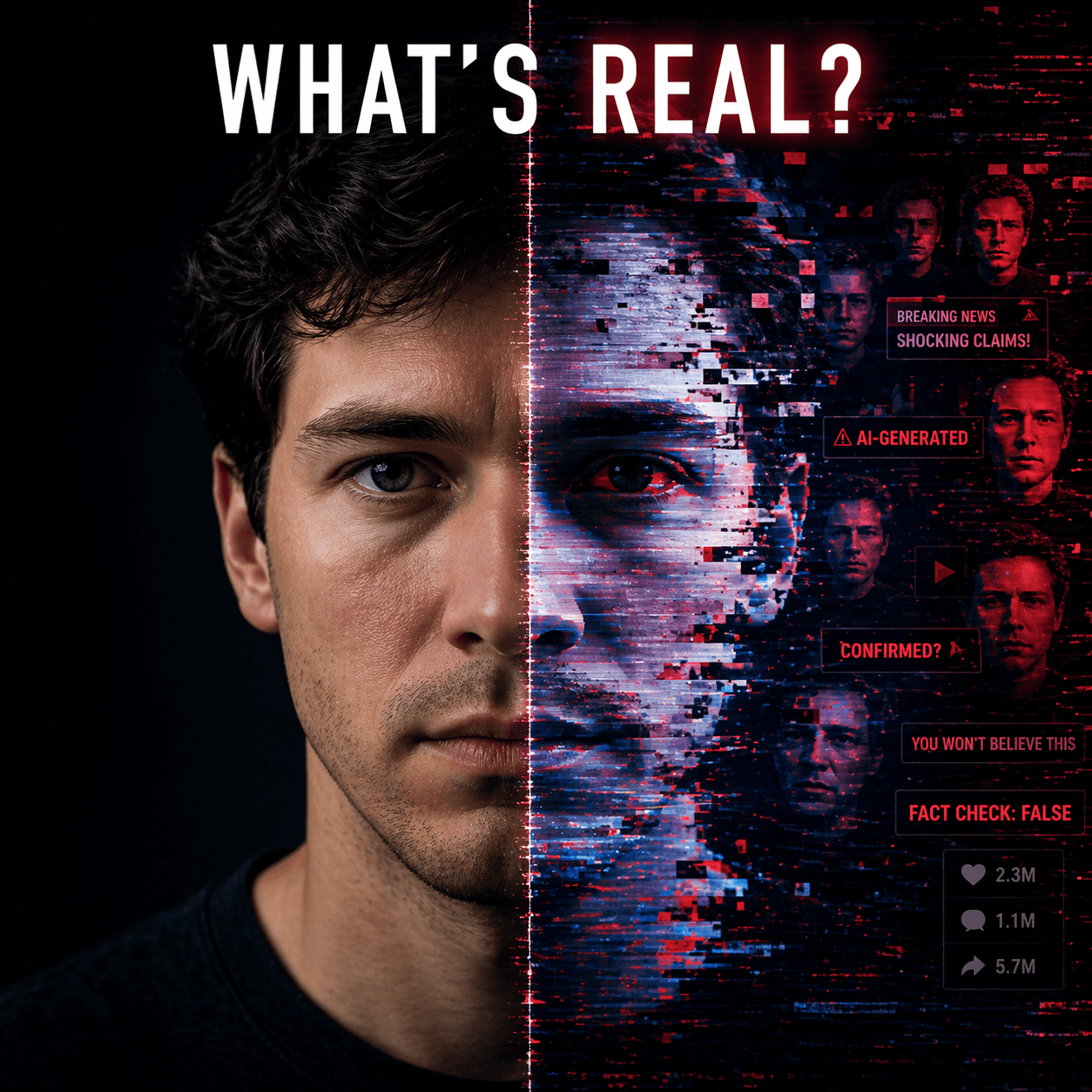

AI-generated memes, propaganda, deepfakes, satire, and disinformation are all starting to collapse into the same visual language during live conflict. That is what makes this so dangerous. It is not just that false material exists. It is that the line between joke, manipulation, narrative warfare, and viral emotional bait is getting harder and harder to see in real time. And once that line disappears for the average person, the platforms distributing the material become part of the problem whether they admit it or not.

What is happening around the Iran conflict is not some isolated media glitch. It is a live information war playing out through feeds, reposts, clips, and synthetic imagery that spreads before anybody can properly check it. Platform executives already seem to understand where this goes. They are on record acknowledging that identifying AI-generated content gets harder over time, not easier. That is the real headline. If the people running the distribution systems already know they are losing the detection war, then this is no longer a moderation issue. It is an infrastructure issue.

That is where I think people still miss the point. The answer is not just more criticism of the media or more complaining about bad content. The answer has to be provenance, accountability, and verified origin. Who said it? Where did it come from? Can a real human be tied to it? Can that human be held accountable for what they put into the system? If not, then all we are really doing is letting synthetic narratives run around pretending to be public discourse.

The Venezuela examples, the fabricated Bezos quote tied to prediction markets, and the war-related deepfakes all point in one direction. The noise is getting louder, faster, and more believable. And when emotional engagement is the primary sorting system of the internet, propaganda and satire can end up performing almost identically even if their intentions are different. That is a brutal reality.

For a founder trying to build a credible media system in this environment, the problem actually becomes clearer, not fuzzier. The black-and-white part of the story is easier to see now. Viral misinformation tied to bot systems, automation, and synthetic media is not shrinking. It is accelerating. So I am not trying to avoid that reality. I am steering directly into it and building for it.

Because if verified human origin is the only thing that survives the detection arms race, then it becomes the scarce asset. And that is exactly where the future media stack has to be built.
